Overview

I implemented and evaluated a collaborative filtering system that uses latent factor matrix factorization to predict user ratings for video games.

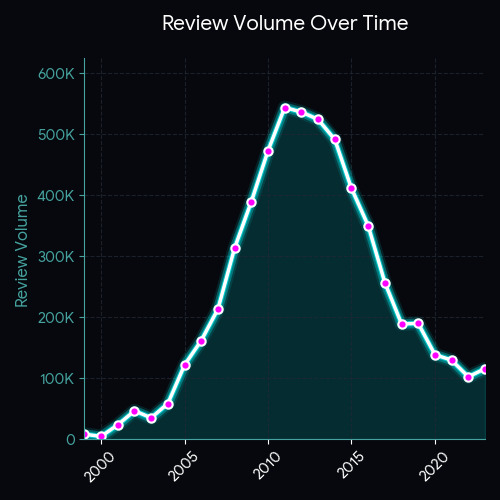

The model was trained and evaluated on a large-scale dataset of 4.6 million ratings from 2.8 million users across 137K items. With fewer than two ratings per user on average, the rating matrix is extremely sparse, so regularization and generalization required careful handling.
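To put the sparsity in numbers, the short calculation below uses only the rounded dataset sizes quoted above. It is a back-of-the-envelope sketch, not output from the project's own pipeline, and the exact figures would differ slightly with unrounded counts.

```python
# Dataset sizes as reported above (rounded figures).
n_ratings = 4_600_000
n_users = 2_800_000
n_items = 137_000

# Fraction of the user-item matrix that is actually observed.
density = n_ratings / (n_users * n_items)

# Average number of ratings attached to each user and each item.
ratings_per_user = n_ratings / n_users
ratings_per_item = n_ratings / n_items

print(f"density: {density:.2e}")
print(f"mean ratings per user: {ratings_per_user:.2f}")
print(f"mean ratings per item: {ratings_per_item:.1f}")
```

Roughly 0.0012% of the user-item matrix is observed, and the average user contributes about 1.6 ratings, which is why per-user parameters are easy to overfit without regularization.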

Approach

- Implemented SVD-based latent factor decomposition

- Optimized user and item embeddings using stochastic gradient descent

- Applied L2 regularization to mitigate overfitting

- Tuned hyperparameters including embedding dimension, learning rate, and regularization strength

- Benchmarked against multiple baseline models:

  - Global mean predictor

  - User bias model

  - Item bias model

  - Pearson similarity

  - Jaccard similarity

  - Time-decay similarity variants
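The core of the approach above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the project's actual code: the function names `train_mf` and `mse`, the hyperparameter values, the prediction form (global mean plus a dot product of user and item factors), and the synthetic toy data are all hypothetical.

```python
import numpy as np

def train_mf(ratings, n_users, n_items, k=4, lr=0.05, reg=0.02, epochs=200, seed=0):
    """Fit r_ui ~ mu + p_u . q_i by SGD on squared error with an L2 penalty."""
    rng = np.random.default_rng(seed)
    mu = float(np.mean([r for _, _, r in ratings]))  # global mean
    P = rng.normal(0.0, 0.1, size=(n_users, k))      # user embeddings
    Q = rng.normal(0.0, 0.1, size=(n_items, k))      # item embeddings
    for _ in range(epochs):
        # Visit the observed ratings in a fresh random order each epoch.
        for idx in rng.permutation(len(ratings)):
            u, i, r = ratings[idx]
            err = r - (mu + P[u] @ Q[i])
            pu = P[u].copy()  # keep the old user vector for the item update
            P[u] += lr * (err * Q[i] - reg * P[u])
            Q[i] += lr * (err * pu - reg * Q[i])
    return mu, P, Q

def mse(ratings, predict):
    """Mean squared error of predict(u, i) over (user, item, rating) triples."""
    return float(np.mean([(r - predict(u, i)) ** 2 for u, i, r in ratings]))

# Synthetic toy data (an assumption for illustration): low-rank signal plus noise.
rng = np.random.default_rng(42)
n_users, n_items = 30, 20
true_P = rng.normal(size=(n_users, 2))
true_Q = rng.normal(size=(n_items, 2))
observed = [
    (u, i, 3.0 + float(true_P[u] @ true_Q[i]) + float(rng.normal(0.0, 0.1)))
    for u in range(n_users)
    for i in range(n_items)
    if rng.random() < 0.5  # keep about half of the entries
]

# Simple random split into training and held-out ratings.
order = rng.permutation(len(observed))
cut = int(0.8 * len(observed))
train = [observed[j] for j in order[:cut]]
held_out = [observed[j] for j in order[cut:]]

mu, P, Q = train_mf(train, n_users, n_items)

baseline_train_mse = mse(train, lambda u, i: mu)
mf_train_mse = mse(train, lambda u, i: mu + P[u] @ Q[i])
baseline_held_out_mse = mse(held_out, lambda u, i: mu)
mf_held_out_mse = mse(held_out, lambda u, i: mu + P[u] @ Q[i])

print(f"train    MSE: global mean {baseline_train_mse:.3f}, MF {mf_train_mse:.3f}")
print(f"held-out MSE: global mean {baseline_held_out_mse:.3f}, MF {mf_held_out_mse:.3f}")
```

The global mean predictor is included because it is the baseline the results are reported against. On real data, `k`, `lr`, and `reg` would be tuned on a validation set rather than fixed as they are here.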

Results

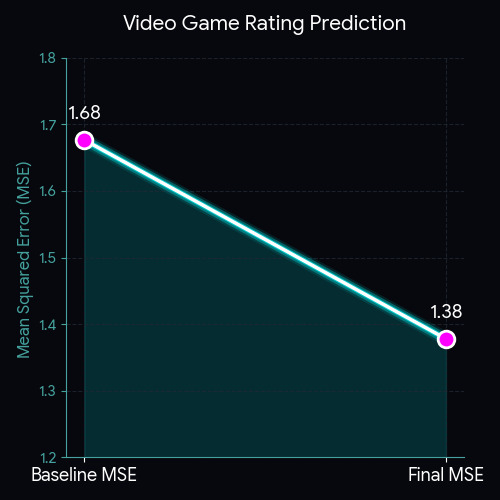

The SVD latent factor model achieved:

- Test MSE: 1.3779

- Global Mean Baseline MSE: 1.6768

- ~17.8% reduction in MSE relative to the global mean baseline
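The relative improvement follows directly from the two MSE values reported above. The snippet below reproduces the arithmetic; the RMSE values are derived here only for convenience and are not separately reported results.

```python
import math

baseline_mse = 1.6768  # global mean baseline, as reported above
svd_mse = 1.3779       # SVD latent factor model, as reported above

# Relative reduction in MSE compared with the baseline.
reduction = (baseline_mse - svd_mse) / baseline_mse
print(f"relative MSE reduction: {reduction:.1%}")

# The same comparison on the RMSE scale, i.e. in rating units.
baseline_rmse = math.sqrt(baseline_mse)
svd_rmse = math.sqrt(svd_mse)
print(f"RMSE: baseline {baseline_rmse:.4f}, SVD {svd_rmse:.4f}")
```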

Latent factor modeling consistently outperformed similarity-based and bias-only approaches on both validation and test sets.

Teamwork & Collaboration

This project was completed in a team of four engineers.

I led the team by organizing task distribution, setting internal milestones, and ensuring alignment with project deadlines. We followed lightweight Agile practices, including:

- Bi-weekly standup meetings to track progress and resolve blockers

- Iterative development with incremental model improvements

- Clear division of responsibilities (data preprocessing, modeling, evaluation, benchmarking)

- Shared Git-based workflow for collaboration and version control

I coordinated model experimentation efforts and aligned the team on evaluation methodology to ensure consistent and fair comparison across all models.

What I Learned

- Why matrix factorization scales better than memory-based similarity models

- The impact of regularization in high-sparsity datasets

- How hyperparameter tuning meaningfully affects generalization performance

- How to design reproducible evaluation pipelines for recommender systems

- How to lead a small engineering team using Agile principles to deliver a complete, well-evaluated system on schedule
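The first point in this list, on scalability, can be illustrated with a rough count based on the dataset sizes quoted earlier. The embedding dimension `k = 50` is a hypothetical value chosen for illustration, and the pair counts are a worst case: practical memory-based systems usually compute only a subset of similarities.

```python
n_users = 2_800_000
n_items = 137_000
k = 50  # hypothetical embedding dimension, not the tuned value

# Matrix factorization stores one k-dimensional vector per user and per item.
mf_params = (n_users + n_items) * k

# A memory-based model compares pairs of users (or pairs of items).
user_pairs = n_users * (n_users - 1) // 2
item_pairs = n_items * (n_items - 1) // 2

print(f"MF parameters:   {mf_params:,}")
print(f"user-user pairs: {user_pairs:,}")
print(f"item-item pairs: {item_pairs:,}")
```

The factorization grows linearly with the number of users and items, while the number of candidate pairs grows quadratically.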